Many companies will also customize generative AI on their own data to help improve branding and communication. Programming teams will use generative AI to enforce company-specific best practices for writing and formatting more readable and consistent code. Business users, for example, could explore product marketing imagery using text descriptions.

“Deepfakes,” images and videos that are created by AI and purport to be real but are not, have already arisen in media, entertainment, and politics. Until now, however, creating deepfakes required considerable computing skill. DALL-E, a text-to-image generative AI system, was released by OpenAI in January 2021, and OpenAI has attempted to control fake images by “watermarking” each DALL-E 2 image with a distinctive symbol. More controls are likely to be required in the future, however, particularly as generative video creation becomes mainstream.

By drawing on real-world data, generative AI can create simulations that provide predictive insights into product performance and process outcomes. To demonstrate the real impact of AI, we have also included real-world generative AI examples that are already changing how people work. Such progress builds on itself, a dynamic on full display in 2022 and 2023.

Moreover, these tools can also help create text-based reports and perform complex business calculations. Generative AI can help auditors to spot and flag audit abnormalities for further examination. When incorporated with human evaluation correctly, generative AI tools can be useful in identifying potential fraud and enhancing internal audit functions.

This can help you create targeted content that resonates with your audience, which can lead to higher engagement and conversion rates.

By using machine learning algorithms, manufacturers can predict equipment failures and maintain their equipment proactively. These models can be trained on data from the machines themselves, such as temperature, vibration, and sound. As the models learn from this data, they can generate predictions about potential failures, allowing for preventative maintenance and reducing downtime.
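The idea of flagging likely failures from sensor data can be illustrated with a very small sketch. It is an assumption-heavy stand-in: instead of a trained machine learning model, it uses a plain z-score check against a healthy baseline, and the function name `flag_anomalies`, the threshold, and the vibration values are all hypothetical.

```python
import statistics


def flag_anomalies(readings, baseline_size=20, threshold=3.0):
    """Return indices of readings that deviate strongly from a healthy baseline.

    The first `baseline_size` readings are assumed to come from a healthy machine.
    """
    baseline = readings[:baseline_size]
    mean = statistics.fmean(baseline)
    stdev = statistics.stdev(baseline)
    if stdev == 0:
        raise ValueError("baseline readings are constant; cannot compute a z-score")

    flagged = []
    for i, value in enumerate(readings[baseline_size:], start=baseline_size):
        z_score = (value - mean) / stdev
        if abs(z_score) > threshold:
            flagged.append(i)
    return flagged


# Made-up vibration amplitudes: stable at first, then drifting upward
# in the way a wearing bearing might.
healthy = [1.0 + 0.01 * ((i * 7) % 5 - 2) for i in range(20)]
worn = [1.0 + 0.05 * i for i in range(1, 11)]
vibration = healthy + worn

alerts = flag_anomalies(vibration)
print("Readings that may warrant inspection:", alerts)
```

A production system would more likely learn from several sensors at once and from labeled failure history; the sketch only shows the basic loop of learning what "normal" looks like and flagging departures from it.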

The impact of misusing generative AI can be wide-ranging and severe, from perpetuating stereotypes, hate speech and harmful ideologies to damaging personal and professional reputation and the threat of legal and financial repercussions. It has even been suggested that the misuse or mismanagement of generative AI could put national security at risk.

Variational autoencoders (VAEs) use two networks to interpret and generate data: an encoder and a decoder. The encoder takes the input data and compresses it into a simplified format. The decoder then takes this compressed information and reconstructs it into something new that resembles the original data, but isn’t entirely the same.
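The encoder and decoder roles can be sketched in a few lines of plain Python. This is only a forward pass under stated assumptions: the class `TinyVAE`, its single linear layers, and the random, untrained weights are hypothetical, and a real VAE would use deeper networks trained with a framework. The loss shown is the usual combination of reconstruction error and a KL-divergence term.

```python
import math
import random


def linear(weights, bias, vec):
    """Apply a dense layer: one output per weight row."""
    return [sum(w * v for w, v in zip(row, vec)) + b for row, b in zip(weights, bias)]


def random_matrix(rows, cols, rng, scale=0.1):
    return [[rng.gauss(0.0, scale) for _ in range(cols)] for _ in range(rows)]


class TinyVAE:
    """Untrained, single-layer VAE used only to show the data flow."""

    def __init__(self, input_dim, latent_dim, seed=0):
        self.rng = random.Random(seed)
        self.w_mu = random_matrix(latent_dim, input_dim, self.rng)
        self.b_mu = [0.0] * latent_dim
        self.w_logvar = random_matrix(latent_dim, input_dim, self.rng)
        self.b_logvar = [0.0] * latent_dim
        self.w_dec = random_matrix(input_dim, latent_dim, self.rng)
        self.b_dec = [0.0] * input_dim

    def encode(self, x):
        # Compress the input into the mean and log-variance of a latent Gaussian.
        return linear(self.w_mu, self.b_mu, x), linear(self.w_logvar, self.b_logvar, x)

    def sample_latent(self, mu, logvar):
        # Reparameterization: z = mu + sigma * eps, with eps drawn from N(0, 1).
        return [m + math.exp(0.5 * lv) * self.rng.gauss(0.0, 1.0) for m, lv in zip(mu, logvar)]

    def decode(self, z):
        # Reconstruct something input-shaped from the compressed code.
        return linear(self.w_dec, self.b_dec, z)

    def loss(self, x):
        mu, logvar = self.encode(x)
        z = self.sample_latent(mu, logvar)
        x_hat = self.decode(z)
        reconstruction = sum((a - b) ** 2 for a, b in zip(x, x_hat))
        kl = -0.5 * sum(1.0 + lv - m * m - math.exp(lv) for m, lv in zip(mu, logvar))
        return reconstruction + kl, x_hat


vae = TinyVAE(input_dim=4, latent_dim=2)
x = [0.2, 0.4, 0.6, 0.8]
total_loss, x_hat = vae.loss(x)
print("loss:", total_loss)
print("reconstruction:", x_hat)
```

Because the latent code is sampled rather than copied, decoding the same input twice gives slightly different outputs, which is where the "similar but not identical" behavior comes from.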

Founder of the DevEducation project

Google’s Bard chatbot made headlines in February 2023 after it shared incorrect information in a demo video, causing shares of parent company Alphabet (GOOG, GOOGL) to fall around 9% in the days following the announcement. Here are some of the most popular recent generative AI interfaces. To be part of this incredibly exciting era of AI, join our diverse team of data scientists and AI experts and start revolutionizing what’s possible for business and society. When enabled by the cloud and driven by data, AI is the differentiator that powers business growth.


DALL-E 2 and other image generation tools are already being used for advertising. Nestle used an AI-enhanced version of a Vermeer painting to help sell one of its yogurt brands. Mattel is using the technology to generate images for toy design and marketing.

In a generative adversarial network (GAN), the generator produces new data whenever there is a user input or prompt, and the discriminator analyzes that data for authenticity. Feedback from the discriminator is used to adjust the generator’s parameters and refine the output. In other words, generative models try to understand the structure of the data and use that understanding to generate new data similar to the original data. They differ from discriminative models, which are designed to classify or label data based on predefined categories. Discriminative models are often used in areas like facial recognition, where they are trained to recognize specific features or characteristics of a person’s face.
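The generator and discriminator feedback loop can be sketched with a toy, one-dimensional example. Everything here is an illustrative assumption: the function `train_toy_gan`, the two-parameter generator and discriminator, the hand-derived gradients, and the target distribution are hypothetical, and real systems use deep networks and a training framework. Toy setups like this are also not guaranteed to converge.

```python
import math
import random


def sigmoid(u):
    """Numerically stable logistic function."""
    if u >= 0:
        return 1.0 / (1.0 + math.exp(-u))
    e = math.exp(u)
    return e / (1.0 + e)


def train_toy_gan(steps=1000, lr=0.01, real_mean=4.0, real_std=0.5, seed=0):
    rng = random.Random(seed)
    a, b = 1.0, 0.0  # generator: fake = a * noise + b
    w, c = 0.0, 0.0  # discriminator: D(x) = sigmoid(w * x + c)

    for _ in range(steps):
        real = rng.gauss(real_mean, real_std)
        noise = rng.gauss(0.0, 1.0)
        fake = a * noise + b

        # Discriminator step: minimize -log D(real) - log(1 - D(fake)).
        d_real = sigmoid(w * real + c)
        d_fake = sigmoid(w * fake + c)
        grad_w = -(1.0 - d_real) * real + d_fake * fake
        grad_c = -(1.0 - d_real) + d_fake
        w -= lr * grad_w
        c -= lr * grad_c

        # Generator step: minimize -log D(fake), using the updated discriminator.
        d_fake = sigmoid(w * fake + c)
        grad_fake = -(1.0 - d_fake) * w  # gradient of the loss w.r.t. the fake sample
        a -= lr * grad_fake * noise
        b -= lr * grad_fake

    return a, b, w, c


a, b, w, c = train_toy_gan()
print("generator scale and offset:", a, b)
print("discriminator weight and bias:", w, c)
```

The discriminator's verdict on each fake sample is the only signal the generator receives, which mirrors the feedback loop described above.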


For example, the italicized text above was written by GPT-3, a “large language model” (LLM) created by OpenAI, in response to the first sentence, which we wrote. GPT-3’s text reflects the strengths and weaknesses of most AI-generated content. First, it is sensitive to the prompts fed into it; we tried several alternative prompts before settling on that sentence. Second, the system writes reasonably well; there are no grammatical mistakes, and the word choice is appropriate.

Some companies are exploring the idea of LLM-based knowledge management in conjunction with the leading providers of commercial LLMs. It seems likely that users of such systems will need training or assistance in creating effective prompts, and that the knowledge outputs of the LLMs might still need editing or review before being applied. Assuming that such issues are addressed, however, LLMs could rekindle the field of knowledge management and allow it to scale much more effectively. But once a generative model is trained, it can be “fine-tuned” for a particular content domain with much less data.

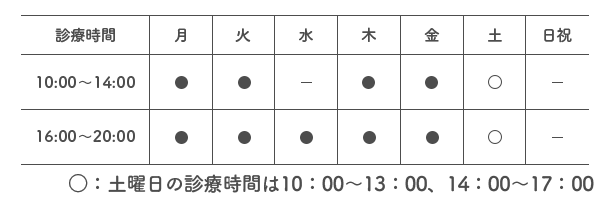

SMiLE 整骨院

| 診療時間 |  |

|---|---|

| 住所 | 〒112-0006 東京都文京区小日向4-5-10 小日向サニーハイツ201 |

| アクセス | 東京メトロ丸の内線「茗荷谷」駅 徒歩2分 |